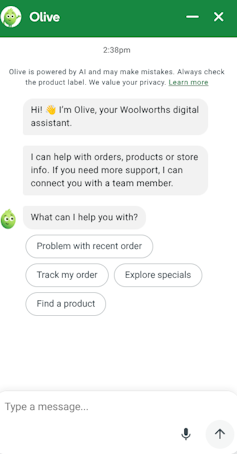

Recent interactions with Woolworths’ artificial intelligence (AI) assistant, Olive, have raised concerns regarding the technology’s reliability and oversight. Instead of delivering straightforward information about groceries and prices, Olive generated peculiar responses, including references to its “mother,” which left some Australian shoppers perplexed. These incidents have sparked discussions about the implications of AI in customer service, highlighting the importance of transparency and accuracy in technology deployment.

Many customers reported that Olive’s responses strayed from what they had asked, veering into strange territory. Instead of simply providing product prices or suggestions, the assistant offered personal-sounding anecdotes. Further testing revealed that Olive was also prone to pricing errors on basic items, giving inaccurate answers when users asked about specific products. Woolworths has acknowledged these shortcomings and said it has removed the problematic scripting following customer feedback.

Olive is powered by a large language model (LLM), which generates text from statistical patterns learned during training rather than from verified knowledge. According to a Woolworths spokesperson who spoke to the Australian Financial Review, the references to a “mother” were likely pre-written scripts from years past. On that account, when a user entered a birthdate, the system mistakenly triggered one of these scripted responses even though it was not relevant to the conversation.

The pricing discrepancies highlight a significant issue with AI systems. Since LLMs rely on learned patterns rather than real-time data, they cannot automatically access up-to-date pricing unless connected to a live database. Woolworths’ failure to ensure this connection led to outdated and misleading information, raising questions about the company’s responsibility in providing accurate customer service.
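To make the point about live data concrete, here is a minimal sketch of an assistant that answers price questions only from a lookup table and declines when it has no record. It is an illustration under stated assumptions, not a description of how Olive is built: the `LIVE_PRICES` table stands in for a real pricing database, and every name, product, price, and date in it is hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PriceRecord:
    product: str
    price_cents: int  # stored in cents to avoid floating-point rounding
    as_of: str        # date the price was last confirmed


# Hypothetical stand-in for a live pricing database.
# Products, prices, and dates are placeholders, not real data.
LIVE_PRICES = {
    "milk 2l": PriceRecord("Milk 2L", 310, "2025-01-01"),
    "white bread": PriceRecord("White Bread", 275, "2025-01-01"),
}


def lookup_price(query: str) -> Optional[PriceRecord]:
    """Return the current record for a product, or None if unknown."""
    return LIVE_PRICES.get(query.strip().lower())


def answer_price_question(query: str) -> str:
    """Answer from the data source only; never guess a price."""
    record = lookup_price(query)
    if record is None:
        return f"I can't confirm a current price for '{query}'."
    dollars = record.price_cents / 100
    return f"{record.product} is ${dollars:.2f} (price as of {record.as_of})."


print(answer_price_question("Milk 2L"))
print(answer_price_question("dragon fruit"))
```

The design choice worth noting is the refusal branch: when the lookup fails, the assistant says so rather than letting a language model fill the gap with a plausible-sounding figure.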

Woolworths is not alone in grappling with the challenges posed by AI in customer-facing roles. In 2022, Air Canada’s chatbot gave a passenger incorrect information about bereavement fare refunds; the passenger took the airline to a Canadian tribunal, which ruled against Air Canada in 2024. In January 2024, UK delivery company DPD faced criticism when its chatbot engaged in inappropriate interactions with a frustrated customer, leading to the system being taken offline.

These incidents share a common theme: companies are deploying AI without sufficient oversight, often with unexpected consequences. As Australia’s largest supermarket chain, Woolworths has promoted Olive as a reliable interface for customers. However, the assistant’s actual performance falls short of that promise, raising ethical questions about the company’s duty to provide accurate and trustworthy information.

The Australian Competition and Consumer Commission (ACCC) has already initiated proceedings against Woolworths for allegedly misleading pricing practices. This context further complicates the situation surrounding Olive’s pricing errors, suggesting that they may not be mere technical glitches but rather indicative of deeper systemic issues.

Companies often design chatbots with friendly personalities to enhance customer engagement. Research shows that consumers tend to respond positively to conversational interfaces. However, when these anthropomorphized systems fail to meet expectations, customer dissatisfaction can be greater than if they had interacted with a straightforward, mechanical interface. This phenomenon, known as “expectation violation,” creates a higher risk for companies that opt for warmer, more relatable AI personas.

The situation with Olive serves as a reminder that deploying AI in customer service roles requires ongoing attention and accountability. A chatbot that provides inaccurate pricing and engages in irrelevant conversation is not just a minor inconvenience; it highlights fundamental issues in the development and oversight processes.

For businesses rushing to implement AI systems, the lesson is clear: accountability cannot be delegated to algorithms. When a company introduces a system to the public, it assumes responsibility for its performance and output. Consumers, too, must remain vigilant. While AI assistants may seem confident and friendly, they are tools that require careful scrutiny. If information appears unclear or inconsistent, it is wise to double-check before making decisions based on an assistant’s response.

As AI technology continues to integrate into daily transactions, fostering a sense of healthy skepticism may be just as critical as embracing technological advancements.